AI has transformed software development. Code completion predicts what you will type next. AI reviewers catch bugs before human review. Test generation covers edge cases you might miss. Documentation updates happen automatically as code changes. This is not science fiction; it is the current state of the art for teams using AI-assisted development workflows.

But integrating AI effectively requires more than installing tools. It requires understanding where AI helps and where it hinders. It requires maintaining code quality when AI generates code. It requires verifying AI suggestions rather than accepting them blindly. It requires updating workflows and team practices to incorporate AI assistance.

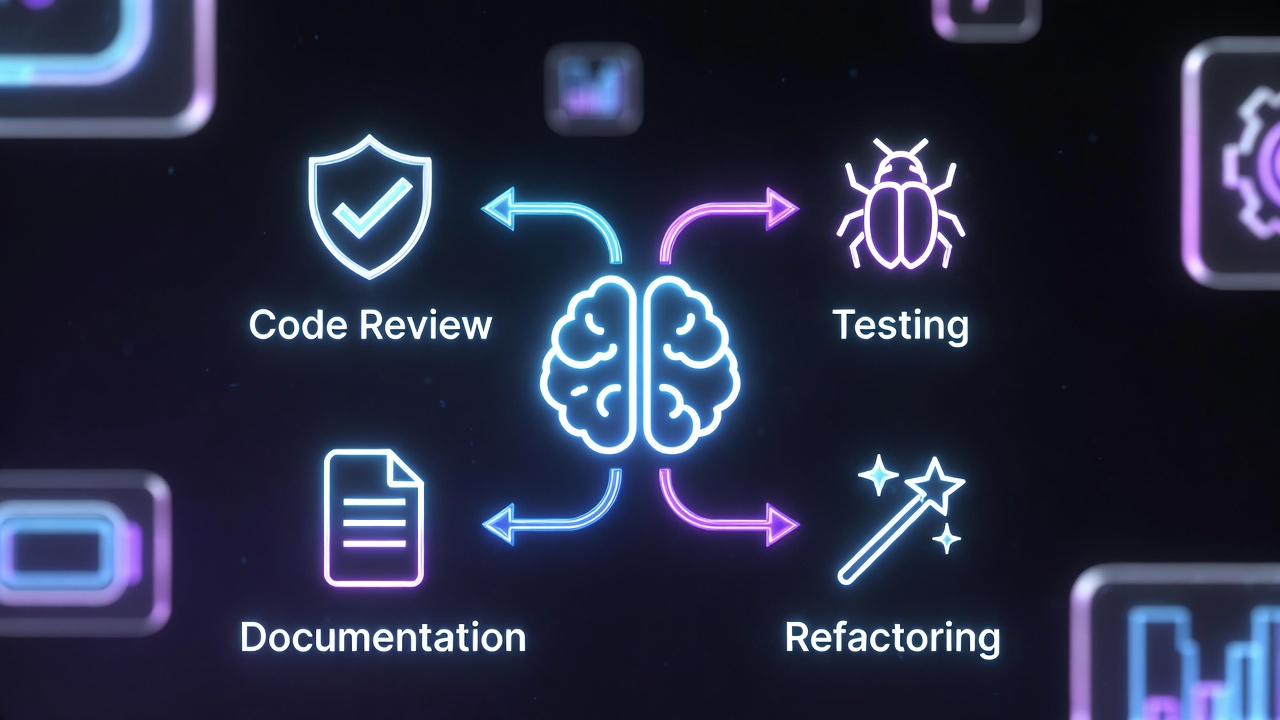

I have integrated AI tools into development workflows across multiple teams. I have learned that AI excels at pattern recognition and boilerplate but struggles with architectural decisions. I have seen teams double their velocity with AI assistance and others create technical debt by accepting every suggestion. This guide covers the patterns that work: AI-powered code review that catches issues early, automated test generation that increases coverage, documentation maintenance that stays synchronized, refactoring assistance that accelerates modernization, and workflow integration that fits the tools your team already uses.

The AI-Assisted Development Stack

Current Capabilities

- Code completion: Predict and generate code as you type

- Code review: Automated review for common issues

- Test generation: Create unit tests from code

- Documentation: Generate and update docs from code

- Refactoring: Suggest and apply transformations

- Debugging: Explain errors and suggest fixes

Tool Landscape

- Code completion: GitHub Copilot, Cursor, Amazon CodeWhisperer (now part of Amazon Q Developer)

- Code review: GitHub Copilot Review, Amazon CodeGuru, SonarQube with AI

- Test generation: CodiumAI (now Qodo), GitHub Copilot Chat

- Documentation: Mintlify, ReadMe.com AI, custom LLM pipelines

- Refactoring: Sourcegraph Cody, Continue.dev

AI Code Review

Automated Review Pipeline

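# .github/workflows/ai-review.yml
name: AI Code Review

on: [pull_request]

# Needed so the job can post review comments on the pull request
permissions:
  contents: read
  pull-requests: write

jobs:
  ai-review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
        with:
          fetch-depth: 0  # full history, so the diff against the base branch is available

      - name: AI Code Review
        # Placeholder name: substitute the owner/repo@version of the review action you use
        uses: ai-reviewer-action@v1
        with:
          github-token: ${{ secrets.GITHUB_TOKEN }}
          openai-api-key: ${{ secrets.OPENAI_API_KEY }}
          review-level: 'detailed'

What AI Reviewers Catch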

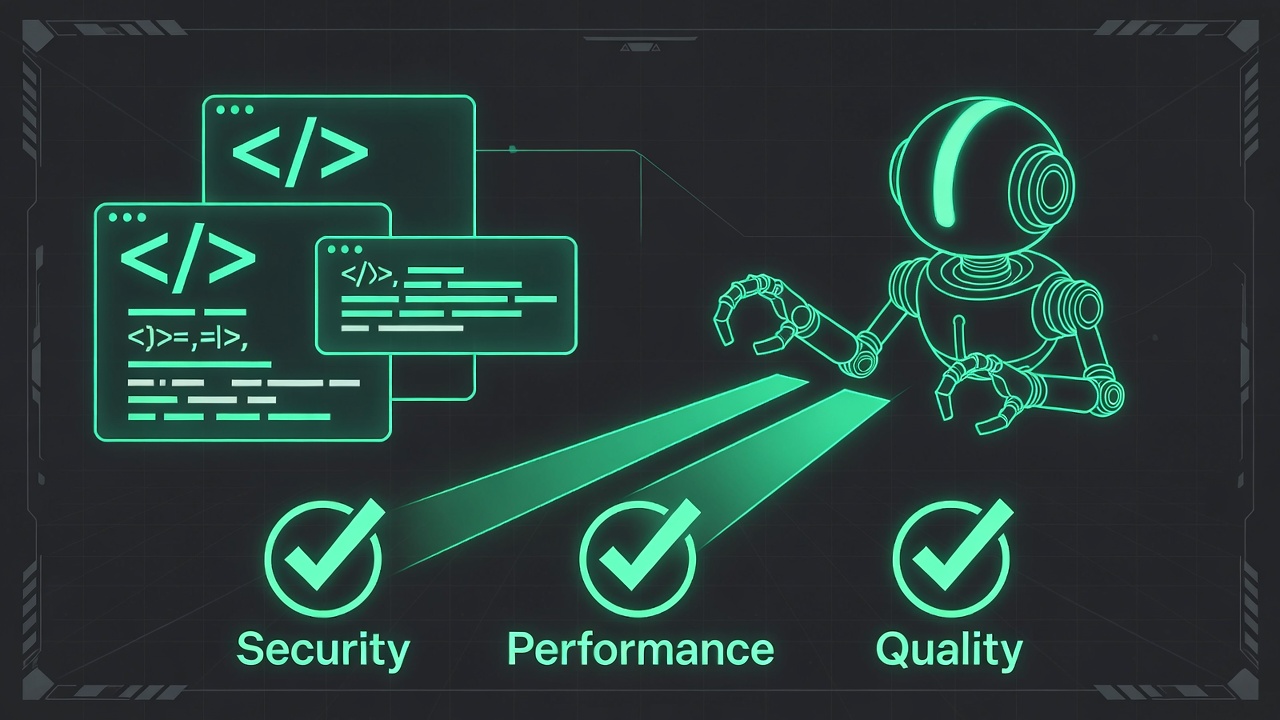

Security issues:

- SQL injection vulnerabilities

- Hardcoded secrets

- Unsafe deserialization

- XSS vulnerabilities

Performance problems:

- N+1 queries

- Unnecessary computations

- Memory leaks

- Inefficient algorithms

Code quality:

- Unused imports

- Dead code

- Complex functions

- Missing error handling
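To make the first security item concrete, here is a minimal sketch of the kind of change a reviewer (AI or human) should flag. It uses Python's built-in sqlite3 module; the table, the data, and the function names are illustrative assumptions, not part of any tool mentioned above.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two example users (illustrative data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
    conn.executemany(
        "INSERT INTO users (email) VALUES (?)",
        [("alice@example.com",), ("bob@example.com",)],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str) -> list[tuple]:
    # Vulnerable: user input is interpolated directly into the SQL text.
    query = f"SELECT id, email FROM users WHERE email = '{email}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str) -> list[tuple]:
    # Parameterized: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT id, email FROM users WHERE email = ?", (email,)
    ).fetchall()
```

Calling `find_user_unsafe(conn, "x' OR '1'='1")` returns every row in the table, while `find_user_safe` with the same input returns nothing, because the payload is treated as a literal email address.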

Implementation Example

# AI review script
import json

import openai  # requires openai>=1.0; reads OPENAI_API_KEY from the environment


def ai_review_diff(diff: str) -> list[dict]:
    """Ask the model to review a diff and return a list of findings."""
    prompt = f"""Review this code diff for issues:

{diff}

Check for:
1. Security vulnerabilities
2. Performance problems
3. Logic errors
4. Code smells

Return a JSON object with a "findings" array:
{{"findings": [{{
    "line": number,
    "severity": "high|medium|low",
    "category": "security|performance|logic|quality",
    "message": "description",
    "suggestion": "recommended fix"
}}]}}"""

    response = openai.chat.completions.create(
        model="gpt-4o",  # JSON mode needs a model that supports response_format
        messages=[{"role": "user", "content": prompt}],
        # JSON mode returns a top-level object, so the findings are wrapped in one
        response_format={"type": "json_object"},
    )
    return json.loads(response.choices[0].message.content).get("findings", [])


# Post as PR comments
def post_findings(file_path: str, findings: list[dict]) -> None:
    for finding in findings:
        # post_pr_comment is a placeholder for your own GitHub API wrapper
        post_pr_comment(
            path=file_path,
            line=finding['line'],
            body=f"**{finding['category'].upper()}: {finding['severity']}**\n\n"
                 f"{finding['message']}\n\n"
                 f"**Suggestion:** {finding['suggestion']}",
        )

Combining with Human Review

AI as first pass:

- AI reviews immediately on PR open

- Addresses trivial issues automatically

- Flags issues for human attention

Human focuses on:

- Architecture decisions

- Business logic correctness

- Maintainability concerns

- Knowledge sharing
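The split between the AI first pass and human attention can be sketched as a small triage step. This is only one possible policy, under the assumption that low-severity quality findings are the only ones safe to handle without a person; the `Finding` class and the `triage` function are hypothetical names, with fields mirroring the finding format used in the review script.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    line: int
    severity: str   # "high", "medium", or "low"
    category: str   # "security", "performance", "logic", or "quality"
    message: str


# Assumption: only low-severity quality findings are handled automatically.
AUTO_CATEGORIES = {"quality"}
SEVERITY_ORDER = {"high": 0, "medium": 1, "low": 2}


def triage(findings: list[Finding]) -> tuple[list[Finding], list[Finding]]:
    """Split findings into (auto_handle, needs_human)."""
    auto: list[Finding] = []
    human: list[Finding] = []
    for finding in findings:
        if finding.severity == "low" and finding.category in AUTO_CATEGORIES:
            auto.append(finding)
        else:
            human.append(finding)
    # Show reviewers the most severe findings first; an unrecognized
    # severity is treated as high so it is never buried.
    human.sort(key=lambda f: SEVERITY_ORDER.get(f.severity, 0))
    return auto, human
```

Keeping the policy in one small function makes it easy to tighten later, for example by routing every security finding to a named reviewer.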

Test Generation

Automated Unit Test Creation

# CodiumAI example
def calculate_discount(price: float, customer_type: str) -> float:
    """Calculate discount based on customer type."""
    if customer_type == "premium":
        return price * 0.8
    elif customer_type == "regular":
        return price * 0.95
    else:
        raise ValueError(f"Unknown customer type: {customer_type}")


# AI generates:
import pytest


def test_calculate_discount_premium():
    # approx avoids brittle exact comparisons on floating-point results
    assert calculate_discount(100.0, "premium") == pytest.approx(80.0)


def test_calculate_discount_regular():
    assert calculate_discount(100.0, "regular") == pytest.approx(95.0)


def test_calculate_discount_unknown_type():
    with pytest.raises(ValueError, match="Unknown customer type: invalid"):
        calculate_discount(100.0, "invalid")


def test_calculate_discount_zero_price():
    assert calculate_discount(0.0, "premium") == 0.0


@pytest.mark.xfail(reason="calculate_discount does not validate price")
def test_calculate_discount_negative_price():
    # Edge case: should handle or reject negative prices.
    # The function above accepts them, so this test fails until validation is added.
    with pytest.raises(ValueError):
        calculate_discount(-10.0, "premium")

Property-Based Test Generation

# AI generates property-based tests
from hypothesis import given, strategies as st


@given(st.floats(min_value=0), st.sampled_from(["premium", "regular"]))
def test_discount_never_exceeds_original(price, customer_type):
    """Discount should never make price higher"""
    result = calculate_discount(price, customer_type)
    assert result <= price
    assert result >= 0


@given(st.floats(min_value=0))
def test_premium_always_cheaper_than_regular(price):
    """Premium discount should be better than regular"""
    premium = calculate_discount(price, "premium")
    regular = calculate_discount(price, "regular")
    assert premium <= regular

Integration Test Generation

# Generate integration tests from API specs
# load_openapi_spec, ai_generate_test, and write_test_file are placeholders
# for helpers you would implement in your own project.
openapi_spec = load_openapi_spec()

for endpoint in openapi_spec['paths']:
    test_code = ai_generate_test(
        endpoint=endpoint,
        spec=openapi_spec['paths'][endpoint],
        framework="pytest",
        style="arrange-act-assert"
    )
    write_test_file(endpoint, test_code)

Documentation Maintenance

Auto-Generated API Docs

# Generate OpenAPI from code annotations
from flask import Flask
from flasgger import Swagger

app = Flask(__name__)
Swagger(app)


@app.route('/users', methods=['POST'])
def create_user():
    """
    Create a new user
    ---
    post:
      summary: Create user
      requestBody:
        required: true
        content:
          application/json:
            schema:
              type: object
              properties:
                email:
                  type: string
                name:
                  type: string
      responses:
        201:
          description: User created successfully
    """
    # Implementation

Code Comment Generation

# AI generates docstrings
def complex_algorithm(data: list[dict]) -> dict:
    # AI analyzes and generates:
    """
    Aggregate transaction data by category and calculate statistics.

    Args:
        data: List of transaction dictionaries with 'category' and 'amount' keys

    Returns:
        Dictionary mapping category to statistics dict with 'total',
        'average', and 'count' keys

    Raises:
        ValueError: If data contains negative amounts

    Example:
        >>> data = [
        ...     {"category": "food", "amount": 10.50},
        ...     {"category": "food", "amount": 25.00}
        ... ]
        >>> complex_algorithm(data)
        {'food': {'total': 35.5, 'average': 17.75, 'count': 2}}
    """
    # Implementation

README Maintenance

# GitHub Action to update README
name: Update Documentation

on:
  push:
    paths:
      - 'src/**'
      - 'api/**'

# Needed so the job can push the regenerated README
permissions:
  contents: write

jobs:
  update-docs:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3

      - name: Generate updated README
        run: |
          python scripts/generate_readme.py

      - name: Commit changes
        run: |
          git config --local user.email "[email protected]"
          git config --local user.name "GitHub Action"
          # Commit and push only when the working tree or index has changes
          git diff --quiet && git diff --staged --quiet ||
            (git add README.md && git commit -m "docs: Auto-update README" && git push)

Refactoring Assistance

Pattern-Based Refactoring

# Before: AI identifies pattern
users = []
for user in database.query(User).all():
    if user.is_active:
        users.append(user)

# AI suggests:
users = [user for user in database.query(User).all() if user.is_active]

# Or better (database-level filtering):
users = database.query(User).filter(User.is_active == True).all()

Modernization

# Legacy callback pattern
process_data(data, callback=handle_result)

# AI suggests async/await
async def process():
    result = await process_data(data)
    await handle_result(result)

Type Annotation Addition

# AI adds type hints
def calculate(a, b, operation):
    if operation == "add":
        return a + b

# AI generates:
from typing import Literal

def calculate(
    a: float,
    b: float,
    operation: Literal["add", "subtract", "multiply", "divide"]
) -> float:
    if operation == "add":
        return a + b
    elif operation == "subtract":
        return a - b
    # ...

Workflow Integration

Pre-Commit Hooks

# .pre-commit-config.yaml
repos:
  - repo: local
    hooks:
      - id: ai-lint
        name: AI Code Review
        entry: python scripts/ai_lint.py
        language: python
        types: [python]

      - id: ai-test-gen
        name: AI Test Generation
        entry: python scripts/ai_generate_tests.py --check
        language: python
        types: [python]

IDE Integration

Cursor IDE workflow:

- Write high-level comment describing intent

- AI generates implementation

- Review and refine

- AI generates tests

- Run and iterate

GitHub Copilot Chat:

- /explain: Explain selected code

- /fix: Suggest a fix for an error

- /tests: Generate tests

- /doc: Generate documentation comments

CI/CD Integration

# .github/workflows/ai-enhanced.yml
name: AI-Enhanced CI

on: [push]

jobs:
  ai-checks:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3

      - name: AI Security Scan
        run: |
          python scripts/ai_security_scan.py --fail-on-high

      - name: AI Test Coverage Check
        run: |
          python scripts/ai_suggest_missing_tests.py

      - name: AI Documentation Check
        run: |
          python scripts/ai_check_docs.py

Best Practices

Verification Required

Always review AI output:

- Security implications

- Business logic correctness

- Performance characteristics

- Edge cases

Red flags:

- Generated code that looks plausible but is wrong

- Missing error handling

- Hardcoded values that should be configurable

- Over-engineered solutions

Code Quality Standards

AI-generated code must pass:

- All existing linting rules

- Type checking

- Unit tests

- Security scanning

Do not lower standards for AI code.
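One way to enforce a single bar is a gate script that runs the same checks on every change. The sketch below assumes a toolchain of ruff, mypy, pytest, and bandit purely for illustration; `run_gate`, `gate_passed`, and `DEFAULT_CHECKS` are hypothetical names, and the commands should be replaced with whatever the project already enforces.

```python
import subprocess
import sys

# Assumed toolchain; replace with the checks your project already runs.
DEFAULT_CHECKS: dict[str, list[str]] = {
    "lint": ["ruff", "check", "."],
    "types": ["mypy", "."],
    "tests": [sys.executable, "-m", "pytest", "-q"],
    "security": ["bandit", "-r", "src"],
}


def run_gate(checks: dict[str, list[str]]) -> dict[str, bool]:
    """Run each check command and record whether it exited with status 0."""
    results: dict[str, bool] = {}
    for name, cmd in checks.items():
        try:
            completed = subprocess.run(cmd, capture_output=True, text=True)
            results[name] = completed.returncode == 0
        except FileNotFoundError:
            # A missing tool counts as a failed check, not a skipped one.
            results[name] = False
    return results


def gate_passed(results: dict[str, bool]) -> bool:
    # The same bar applies no matter who or what wrote the code.
    return all(results.values())
```

In CI this could be called as `sys.exit(0 if gate_passed(run_gate(DEFAULT_CHECKS)) else 1)`, so a failing or missing check blocks the merge regardless of how the code was written.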

Knowledge Preservation

Document AI decisions:

# AI-assisted refactoring: Converted from callbacks to async/await
# Date: 2026-04-10
# Rationale: Improve readability and error handling
# Reviewed by: @username

Gradual Adoption

Start with:

- Code completion for boilerplate

- Documentation generation

- Test generation for new code

- Code review assistance

Expand to:

- Refactoring assistance

- Legacy code modernization

- Architecture suggestions

Common Pitfalls

Pitfall 1: Blind Acceptance

Accepting all AI suggestions without review. Always verify.

Pitfall 2: Skill Atrophy

Developers forgetting how to code without AI. Maintain fundamental skills.

Pitfall 3: Over-Reliance on Boilerplate

AI generates repetitive code instead of abstracting. Review for refactoring opportunities.

Pitfall 4: Security Blind Spots

AI may generate vulnerable code. Security review remains essential.

Pitfall 5: Context Loss

AI lacking full project context produces suboptimal solutions. Provide context in prompts.
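Providing context can be as simple as assembling the prompt from labeled project snippets before the task itself. The following is a minimal sketch; `build_prompt`, the section titles, and the character budget are all assumptions for illustration and not part of any particular tool's API.

```python
def build_prompt(task: str, context: dict[str, str], max_chars: int = 4000) -> str:
    """Assemble a prompt that places project context ahead of the task.

    Context entries are added in insertion order until the character
    budget is used up, so list the most important ones first.
    """
    sections: list[str] = []
    budget = max_chars
    for title, body in context.items():
        block = f"## {title}\n{body.strip()}\n"
        if len(block) > budget:
            break  # stop adding context once the budget is exhausted
        sections.append(block)
        budget -= len(block)
    sections.append(f"## Task\n{task.strip()}\n")
    return "\n".join(sections)
```

For example, passing `{"Conventions": "Use httpx, not requests.", "Module": "def fetch(url): ..."}` as context puts the team's conventions and the relevant code in front of the model before it sees the request.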

Pitfall 6: No Attribution

Not tracking what code is AI-generated. Document AI assistance for accountability.
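Attribution is easier to audit when it lives in commit metadata rather than in memory. The sketch below assumes a hypothetical team convention: commits containing AI-generated code carry a trailer line such as "AI-Assisted: Copilot". The trailer name and both function names are assumptions, not an established standard.

```python
import re
from typing import Optional

# Hypothetical convention: a commit trailer of the form "AI-Assisted: <tool name>".
TRAILER = re.compile(
    r"^AI-Assisted:[ \t]*(?P<tool>.+)$", re.IGNORECASE | re.MULTILINE
)


def ai_tool_for(commit_message: str) -> Optional[str]:
    """Return the tool named in the trailer, or None if the trailer is absent."""
    match = TRAILER.search(commit_message)
    return match.group("tool").strip() if match else None


def ai_share(commit_messages: list[str]) -> float:
    """Fraction of commits that declare AI assistance."""
    if not commit_messages:
        return 0.0
    flagged = sum(1 for msg in commit_messages if ai_tool_for(msg) is not None)
    return flagged / len(commit_messages)
```

The messages could come from `git log --format=%B`, split per commit; tracking the share over time shows where AI-generated code is concentrated and which reviews deserve extra attention.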

Conclusion

AI-assisted development can increase both productivity and code quality when used thoughtfully. Let AI handle repetitive tasks: boilerplate, documentation, test scaffolding. Keep human judgment for architecture, security, and business logic.

Integrate AI tools into existing workflows through pre-commit hooks, CI pipelines, and IDE extensions. Maintain code quality standards. Verify AI output before committing.

The goal is not AI replacement of developers but AI amplification of developer capabilities. Teams that master this partnership ship faster with higher quality.
